Schema Is Necessary, Not Sufficient: When Technical SEO Excellence Loses to Authority

The Year One Result That Reframes Everything

A mid-sized Registered Investment Adviser we worked with built a technical SEO foundation that, on every audit dimension, matched peer firms many times its size. Schema architecture using the @graph plus @id linked-entity pattern. Twelve AI bot user agents explicitly enumerated in robots.txt. An llms.txt more comprehensively structured than those of two of the three peer firms audited. Server-side rendering passing on every page tested. Hundreds of new keyword rankings earned during the build year. Citation share across AI engines that, on positioning-niche queries, exceeded the firm’s own name recognition.

Twelve months in, total organic clicks were down 9% year over year. A competitor with no documented schema investment, no llms.txt, and a lower Domain Rating outranked the firm on its own registered trademark. Position 1 on a portfolio of high-intent local commercial keywords produced zero clicks across a six-month window. The firm had built the strongest technical SEO foundation in its competitive set and earned the weakest commercial outcome.

This is not a case study about execution failure. The execution was, on every measurable dimension, peer-leading. It is a case study about the limits of technical SEO as an investment thesis in YMYL (Your Money or Your Life) verticals. The thesis was that infrastructure quality could substitute for accumulated authority. The data settled the question. It cannot.

What Schema Architecture Actually Does

Schema markup, structured data, llms.txt, AI bot enumeration, and the rest of the modern technical SEO toolkit do real work. The work is narrow.

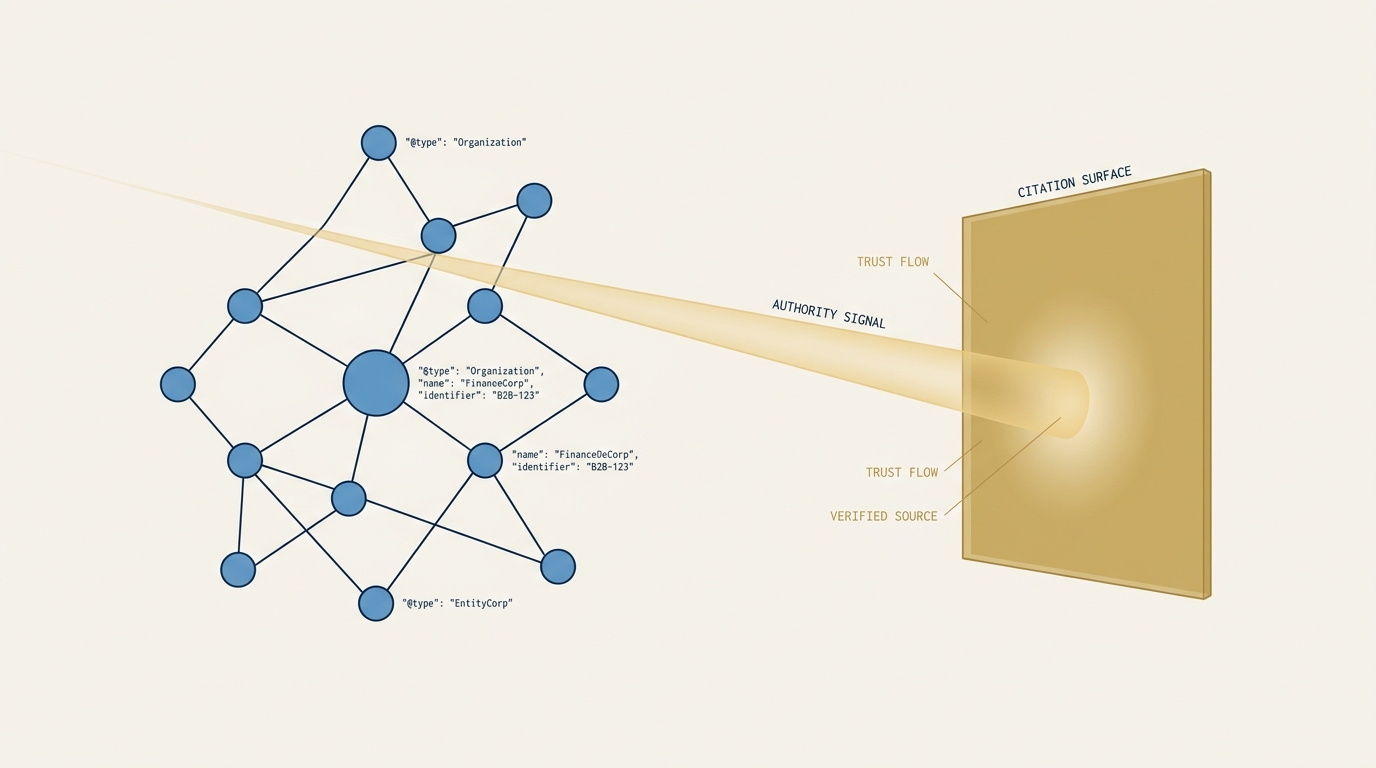

Schema makes a page parseable. It tells crawlers (classical and AI) what the page is about, who authored it, what entities it references, and how it relates to other pages on the site. Without schema, a page is text the crawler has to interpret. With schema, the same page is data the crawler can extract. The difference is real and measurable in eligibility for rich results, AI citation, and entity-graph inclusion.
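To make the linked-entity idea concrete, here is a minimal sketch of the @graph plus @id pattern, built as a Python dictionary and serialized to JSON-LD. The domain, firm name, author, and headline are all hypothetical placeholders, and the choice of schema.org types is an assumption rather than the engagement's actual markup. The small `dangling_refs` helper is also illustrative: it checks that every `{"@id": ...}` reference points at a node that exists in the graph.

```python
import json

BASE = "https://www.example-ria.com"  # hypothetical domain, not the firm in the case


def build_graph(base: str) -> dict:
    """Build a small JSON-LD document where entities reference each other by @id."""
    org_id = f"{base}/#organization"
    site_id = f"{base}/#website"
    author_id = f"{base}/team/jane-doe/#person"
    article_id = f"{base}/insights/retirement-income-planning/#article"
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "FinancialService", "@id": org_id,
             "name": "Example Wealth Advisors", "url": f"{base}/"},
            {"@type": "WebSite", "@id": site_id,
             "url": f"{base}/", "publisher": {"@id": org_id}},
            {"@type": "Person", "@id": author_id,
             "name": "Jane Doe", "worksFor": {"@id": org_id}},
            {"@type": "Article", "@id": article_id,
             "headline": "Retirement Income Planning Basics",
             "author": {"@id": author_id},
             "publisher": {"@id": org_id},
             "isPartOf": {"@id": site_id}},
        ],
    }


def dangling_refs(doc: dict) -> set:
    """Return @id references that do not resolve to any node in the graph."""
    defined = {node["@id"] for node in doc["@graph"]}
    referenced = set()
    for node in doc["@graph"]:
        for value in node.values():
            # A pure reference is a dict whose only key is "@id".
            if isinstance(value, dict) and set(value) == {"@id"}:
                referenced.add(value["@id"])
    return referenced - defined


graph = build_graph(BASE)
print(json.dumps(graph, indent=2))
print("Dangling references:", dangling_refs(graph))
```

The point of the pattern is that the organization, the author, and the article are declared once and then referenced, so a crawler can extract one connected entity graph instead of several disconnected blobs.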

AI bot enumeration in robots.txt and a current llms.txt make a site addressable. AI crawlers that respect these signals know what they are allowed to read and where the answer-extraction surface lives. Server-side rendering ensures that the rendered content the crawler receives matches the content a human user sees, which closes a category of failure where client-side-rendered pages effectively hide their content from non-JavaScript-executing crawlers.
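As a rough illustration of what "addressable" means in practice, the sketch below parses a robots.txt body with Python's standard `urllib.robotparser` and reports whether each enumerated AI user agent may fetch a given URL. The robots.txt content, the domain, and the four bot names shown are assumptions for the example. The engagement enumerated twelve user agents, and the full list is not reproduced here.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt body with a handful of AI crawlers named explicitly.
ROBOTS_TXT = """\
User-agent: GPTBot
Allow: /

User-agent: ClaudeBot
Allow: /

User-agent: PerplexityBot
Allow: /

User-agent: Google-Extended
Allow: /

User-agent: *
Disallow: /client-portal/
"""

AI_BOTS = ["GPTBot", "ClaudeBot", "PerplexityBot", "Google-Extended"]


def allowed_bots(robots_txt: str, bots: list, url: str) -> dict:
    """Map each user agent to whether robots.txt permits it to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {bot: parser.can_fetch(bot, url) for bot in bots}


result = allowed_bots(ROBOTS_TXT, AI_BOTS, "https://www.example-ria.com/insights/")
for bot, ok in result.items():
    print(f"{bot}: {'allowed' if ok else 'blocked'}")
```

One design detail worth checking in a real file: a crawler that matches a named group follows that group, not the `*` group, so a path that must stay private has to be disallowed in each named group as well.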

These are the floor. They are not the ceiling. They are the conditions under which a page is eligible to be considered. They do not determine whether it is selected.

What Authority Actually Does

In YMYL verticals (finance, health, legal, insurance, anything where bad information can hurt someone), AI search engines and Google’s classical ranking systems both apply an authority threshold that is materially higher than in low-YMYL categories. The threshold is not a single signal. It is a composite that includes:

- Backlink composition. Not raw backlink volume, which is roughly what a score like Domain Rating summarizes. The qualitative composition of who links to the site. Successful YMYL sites have backlink profiles concentrated in category-authoritative outlets. A wealth-management firm whose backlinks come from financial press (Barron’s, WSJ, Forbes, Bloomberg, FT, CNBC, NerdWallet, Investopedia) has an authority signature that an AI engine can use as a citation prior. A firm whose backlinks come from generic SEO directories does not, regardless of raw count.

- Named-byline placement. Content the firm’s principals have authored, under their names, in third-party outlets the AI engine recognizes as authoritative. This is the mechanism by which an entity becomes “trusted” in a citation graph. It is not substitutable.

- Awards and recognition. Barron’s Top 100, Forbes Best-in-State, industry-recognized rankings. These produce a category of citation that AI engines weight heavily because the recognition itself is the citation signal.

- Editorial coverage. Named features in tier 1 and tier 2 outlets. Not link-building outreach. Earned editorial mentions.

These signals compound over time horizons measured in years. A firm whose first Barron’s Top 100 recognition arrived in year 8 of its existence is on a typical authority trajectory. A firm at year 12 with no such recognition is unusual, and the absence shows up in AI citation behavior on commercial-intent queries.

The schema architecture I described in the opening case does none of this work. It does the parsing work. It does not, and cannot, do the authority work.

The “Crawled, Currently Not Indexed” Verdict

The single most diagnostic piece of evidence in the case I opened with was Google’s own treatment of the published content. Across an audit of more than 130 URLs (pillar pages, sub-pillars, and a representative blog sample), only about 11% were cleanly indexed. More than 40% of the audited URLs had a “Crawled, currently not indexed” classification or were not indexed at all.
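An audit like this reduces to a simple tally once each URL has a coverage state attached. The sketch below is illustrative only: the five rows are made-up sample data, not the engagement's 130-plus URLs, and the state labels are assumed to mirror what a Search Console page indexing export would contain.

```python
from collections import Counter

# Hypothetical audit rows: (url path, coverage state). Not the real audit data.
AUDIT = [
    ("/pillar/retirement-planning/", "Indexed"),
    ("/pillar/tax-strategy/", "Crawled - currently not indexed"),
    ("/blog/roth-conversion-basics/", "Crawled - currently not indexed"),
    ("/blog/market-update-q3/", "Discovered - currently not indexed"),
    ("/blog/estate-planning-checklist/", "Indexed"),
]


def coverage_shares(rows):
    """Return the share of audited URLs that fall into each coverage state."""
    counts = Counter(state for _url, state in rows)
    total = sum(counts.values())
    return {state: count / total for state, count in counts.items()}


shares = coverage_shares(AUDIT)
for state, share in sorted(shares.items(), key=lambda kv: -kv[1]):
    print(f"{state}: {share:.0%}")
```

Tracking these shares per content type (pillar, sub-pillar, blog) over time is one way to see whether the indexing verdict is moving.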

The reason this verdict matters more than any schema or technical finding is that it represents Google’s own quality assessment, made after the crawler successfully reached the page, after the canonical was correctly declared, after the rendering was server-side, after the schema validated, after the mobile usability was confirmed. The crawler arrived, evaluated the page, and chose not to include it in the index. “Crawled, currently not indexed” is not an HTTP status code. It is the page indexing status Search Console reports for content that the crawler reached but that Google did not include in the index.

This is the load-bearing finding for the argument that schema is necessary but not sufficient. The technical execution layer worked. The content quality layer, the authority signal layer, and the entity recognition layer did not clear the threshold. No amount of additional schema improves the verdict. Schema gets a page into the evaluation. The evaluation itself tests something else.

Why #1 Rankings Now Produce Zero Clicks in YMYL

The other diagnostic finding from the case is a pattern that almost every YMYL site is now confronting. Position 1 organic rankings on high-intent commercial queries produced zero clicks across a multi-month window. Not low CTR. Zero. On queries with hundreds to thousands of monthly impressions.
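The pattern is easy to surface from a query-level performance export. The following is a minimal sketch, assuming rows shaped like a Search Console query report. The `QueryRow` type, the sample queries, and the thresholds (at least 100 impressions, average position of 1.5 or better) are assumptions chosen for illustration, not the criteria used in the engagement.

```python
from dataclasses import dataclass


@dataclass
class QueryRow:
    query: str
    clicks: int
    impressions: int
    position: float  # average position over the reporting window


def zero_click_top_rankings(rows, min_impressions=100, max_position=1.5):
    """Return rows that rank at or near position 1, are seen often, and earn no clicks."""
    return [
        row for row in rows
        if row.clicks == 0
        and row.impressions >= min_impressions
        and row.position <= max_position
    ]


# Hypothetical sample rows.
rows = [
    QueryRow("fiduciary advisor chicago", clicks=0, impressions=840, position=1.0),
    QueryRow("what is a roth conversion", clicks=12, impressions=5200, position=6.3),
    QueryRow("wealth manager near me", clicks=0, impressions=40, position=1.2),
]

for row in zero_click_top_rankings(rows):
    print(f"{row.query}: {row.impressions} impressions, 0 clicks at position {row.position}")
```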

BrightEdge’s One-Year Mark Report from September 2025 found that finance has the lowest AI Overview / top-10-organic overlap of any vertical at 11%, meaning a finance site ranking #1 organically is the least likely of any vertical to be cited inside the AI Overview that sits above it on the page. Conductor’s 2026 AEO/GEO Benchmarks Report documented that AI Overviews (AIO) now appear on approximately a quarter of finance queries, with the educational and planning sub-categories seeing AIO presence above 60%.

The structural mechanism is the SERP environment doing what its design now requires it to do. A user searching a commercial query encounters an AI Overview answer at the top of the page, a “People Also Ask” block beneath it, a knowledge panel for the dominant entity, possibly a Map Pack, possibly a featured snippet, and only then the position 1 organic result. The organic result’s visibility shows up as impressions in Search Console. The click does not, because the user’s question was answered above the fold.

No amount of schema improvement changes this. The page is being shown. It is not being clicked. Schema makes the page eligible. Authority signals determine whether the page is cited inside the AI surface that captured the click.

The HubSpot Cautionary Case

The argument I am making is symmetric. Domain-level authority does not save sites that lean too far into volume content that has no authority underpinning of its own. HubSpot’s documented decline from approximately 13.5 million monthly organic visits in November 2024 to under 2 million by January 2025 (an 86% loss across two months) coincided with Google’s December 2024 Core Update and parallel spam updates targeting scaled content patterns. HubSpot had high domain authority, peer-leading technical infrastructure, and a content engine producing at velocity. Authority did not insulate that volume from an algorithmic posture hostile to templated, AI-assisted content at scale.

CNET Money’s 2023 AI-generated content episode, Sports Illustrated’s AdVon Commerce content scandal, and Originality.AI’s 79,000-site deindexing study all point at the same algorithmic vector. The combination of YMYL category, scaled AI-assisted content, and inadequate authority signal is the empirical failure pattern of 2024-2026. The mid-sized RIA case I opened with is the same pattern in microcosm: heavy infrastructure investment, content velocity, insufficient authority foundation, indexing failure on a substantial fraction of the corpus.

Google’s January 2025 Search Quality Rater Guidelines update explicitly classified templated AI content as the “Lowest” quality category, and the December 2024 and March 2025 spam updates both targeted “scaled content abuse” patterns. The algorithmic vector over the last 18 months is consistently against high-velocity, low-authority content production in YMYL. Schema architecture is irrelevant to this vector. Authority is the variable that determines survival.

The Backlink Composition Test

There is a single diagnostic that captures the authority gap more cleanly than any individual signal: the composition of a site’s top-10 referring domains.

For RIA firms with strong organic positioning, the top-10 referring domain list is concentrated in financial press: Barron’s, WSJ, Forbes, Bloomberg, FT, CNBC, NerdWallet, Investopedia. For RIA firms that are still building authority, the top-10 referring domain list looks structurally different: industry directories, partner organizations, occasional press releases, syndicated content with no editorial selection. The mid-sized RIA case I opened with had a referring domain profile that fell into the second category despite the firm’s technical infrastructure investment.

The backlink composition is the empirical floor under the authority precedence pattern. AI engines and Google both use the referring domain composition as a citation prior for YMYL ranking and citation decisions. The composition is not a schema property. It is an outcome of years of PR, named-byline placement, awards, editorial relationships, and the slow accumulation of editorial citations that authority requires.
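The test can be approximated with a few lines of code. This is a sketch under stated assumptions: the input is a list of referring domains already sorted by whatever strength metric the backlink tool reports, the set of "financial press" domains is my own mapping of the outlets named above to their domains, and the sample list is invented.

```python
# Assumed domain mapping for the outlets named in the text.
FINANCIAL_PRESS = {
    "barrons.com", "wsj.com", "forbes.com", "bloomberg.com",
    "ft.com", "cnbc.com", "nerdwallet.com", "investopedia.com",
}


def press_share(referring_domains, authoritative=FINANCIAL_PRESS, top_n=10):
    """Share of the top-N referring domains that are category-authoritative outlets."""
    top = [d.lower().removeprefix("www.") for d in referring_domains[:top_n]]
    if not top:
        return 0.0
    return sum(d in authoritative for d in top) / len(top)


# Hypothetical top-10 list for a firm that is still building authority.
sample = [
    "advisor-directory.example", "www.forbes.com", "local-chamber.example",
    "partner-cpa.example", "pressrelease-wire.example", "seo-listing.example",
    "investopedia.com", "conference-site.example", "alumni-network.example",
    "syndication-feed.example",
]

print(f"Financial-press share of top 10: {press_share(sample):.0%}")
```

A single ratio hides a lot, so it is best read alongside the list itself, but it gives a number that can be tracked as the authority work proceeds.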

What This Implies for Investment

The strategic implication for any firm in a YMYL vertical is that technical SEO and authority signals are parallel investments, not substitutable ones. Cutting authority investment to fund more schema work does not produce more clicks. It produces a better-instrumented version of the same outcome.

The right shape of the investment, in our case-study engagement, looks like this:

- A bounded technical cleanup phase to close documented gaps (schema completeness, internal linking discipline, indexing rejection diagnostics, trademark cannibalization remediation). One-time work with a clear completion criterion.

- A lean ongoing operating model to maintain the technical infrastructure as a referral-validation surface. Cowork-driven monthly monitoring, low-level tactical execution budget, senior expert on-call. Not a content velocity engine.

- A parallel authority engine to address the actual gap. Financial-services PR retainer with named-feature pursuit. Awards and recognition submissions (Barron’s Top 100, Forbes Best-in-State). Podcast guesting on tier 1 advisory podcasts. Conference keynotes. Named-byline placement in tier 1 outlets.

- A pre-committed evaluation gate at a defined horizon (we used six months) with binary criteria. No “hold zone” for ambiguous outcomes. The gate’s job is to settle whether the authority investment is producing the trajectory the math requires.
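The evaluation gate in the last item can be written down before the data arrives, which is the whole point of pre-commitment. Below is a minimal sketch of a binary gate. The criterion names and thresholds are hypothetical examples, not the engagement's actual criteria, and a missing metric is treated as a failure by assumption.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Criterion:
    name: str
    threshold: float  # minimum observed value required to pass


def evaluate_gate(criteria, observed):
    """Return ("continue" or "stop", per-criterion results). There is no middle state."""
    results = {
        c.name: observed.get(c.name, float("-inf")) >= c.threshold
        for c in criteria
    }
    decision = "continue" if all(results.values()) else "stop"
    return decision, results


# Hypothetical criteria, fixed at the start of the six-month window.
CRITERIA = [
    Criterion("tier1_named_features", 2),
    Criterion("financial_press_referring_domains", 3),
    Criterion("indexed_share_of_audited_urls", 0.50),
]

# Hypothetical observations at the gate.
observed = {
    "tier1_named_features": 3,
    "financial_press_referring_domains": 1,
    "indexed_share_of_audited_urls": 0.62,
}

decision, results = evaluate_gate(CRITERIA, observed)
print(decision, results)
```

Requiring every criterion to pass is one possible rule. What matters is that the rule is fixed in advance and returns only one of two answers.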

The investment ratio matters. In the engagement I described, the authority engine carries roughly five times the budget of the lean SEO operating model. That is the inverse of where most firms in YMYL verticals start. Most firms start by overinvesting in the technical layer (because it is measurable and controllable) and underinvesting in the authority layer (because it is slow, expensive, and resists short-cycle metrics). The data from the engagement settled which investment carries the weight.

The Reframe

Technical SEO excellence is not a substitute for authority. It is a precondition for authority to compound. A firm with strong technical infrastructure and weak authority signals has built the floor of a building that has no superstructure. A firm with strong authority signals and weak technical infrastructure has a superstructure with no floor. Neither is sufficient. Both are necessary.

The cases where technical SEO investment produces outsized commercial returns are cases where the authority foundation already exists. Aspiriant’s fathom blog launched the same year the firm earned its first Barron’s Top 100 ranking. Brighton Jones hired a senior outside Chief Marketing Officer (CMO) in 2016 alongside their content investment scale-up, at year 17 of the firm’s life. Cresset’s PE-network credibility preceded their content. In every case, content scaled after authority arrived. Not before.

For an entrepreneur, an executive, or a firm leader evaluating technical SEO investment, the diagnostic question is not “Should we build schema?” The answer is always yes. The diagnostic question is “What does our authority foundation look like, and what is the parallel investment that will allow the technical work to compound?” Most firms have not asked themselves that second question. It is the more important one.

The discipline that pre-commits the answer is the same discipline I described in the companion post on threshold versus forecast framing for channel investments. Decide the criterion before the data lands. Then accept what the data tells you.

About the Author

Andrés Plashal

Senior Marketing Executive and Strategic Revenue & Search Marketing Engineer. $150M+ attributed revenue across 30+ companies. Google Partner since 2017.

Credentials: UIUC Gies College of Business (Behavioral Science), Columbia College Chicago (Interactive Arts & Media). Member: American Marketing Association, GAABS, Paid Search Association. Published researcher (SCTE/NCTA).