Crawled, Currently Not Indexed: Google's Quality Verdict on Your Content

What the Status Reason Actually Says

The most diagnostic single audit you can run on a content corpus is to pull Google Search Console URL Inspection data on a representative sample of your published URLs and read the status reasons Google reports. Of all the possible status reasons, “Crawled, currently not indexed” is the one that carries the most signal per character.

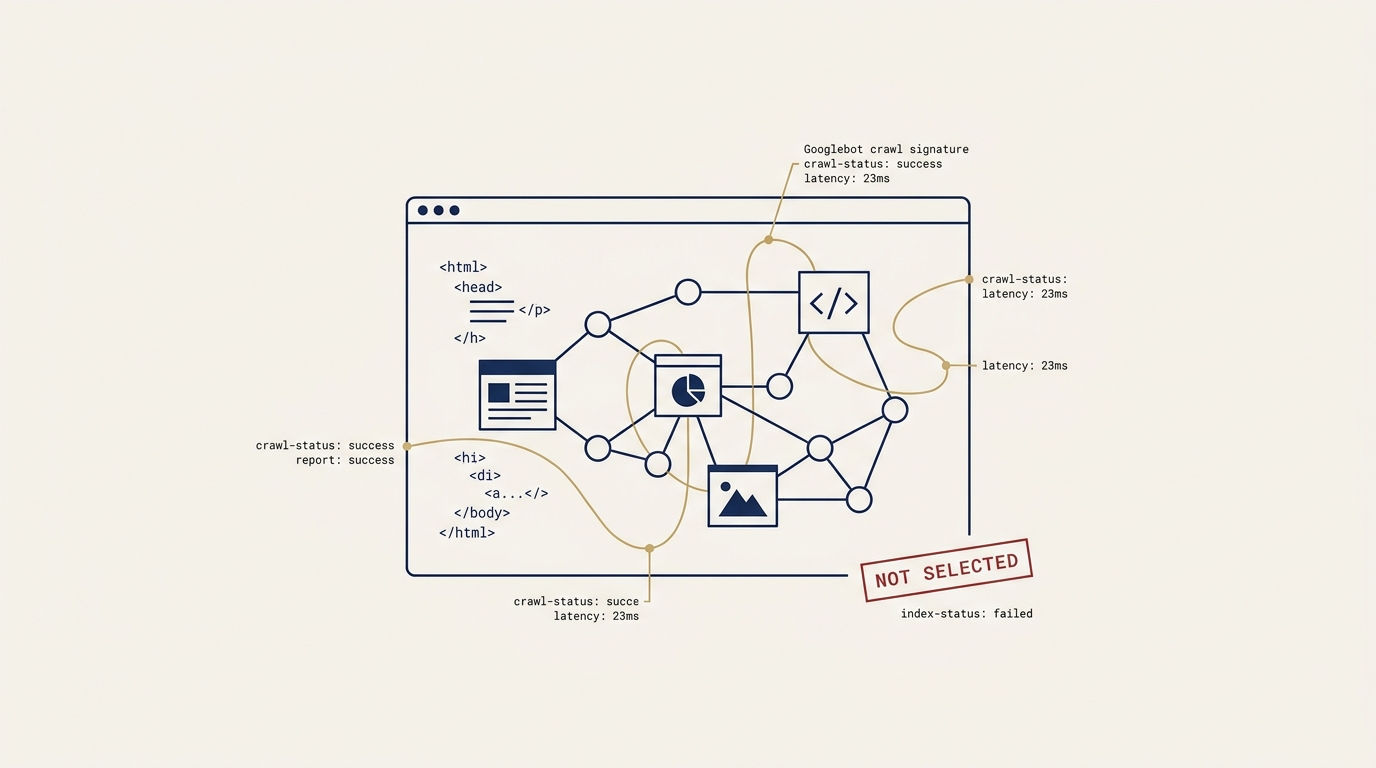

The status reason describes a precise sequence. Googlebot successfully reached the URL. The page fetched without error. Indexing was allowed by your robots directives and meta tags. The canonical declaration was correctly read. If your own rendering, mobile usability, and schema checks also pass, nothing on the technical side stands in the way. And after all of that, Google evaluated the page and chose not to include it in the search index.

This is not a technical failure. The technical layer passed every check. This is Google’s quality assessment, made by an algorithmic system that has decided the content and the authority signals supporting it do not meet the threshold for index inclusion.

Why This Matters More Than Schema Findings

In a recent audit engagement I worked through for a mid-sized firm in a YMYL (Your Money or Your Life) category, the audit surfaced both schema findings (missing fields, malformed entity references, validation warnings) and indexing findings (a substantial fraction of the audited corpus showing “Crawled, currently not indexed” or its sibling statuses). The schema findings were addressable in hours per page. The indexing findings were the structural problem.

The reason the indexing finding carries more weight is that schema findings are corrective. They identify specific defects that, once fixed, restore eligibility. Indexing findings are evaluative. They communicate that Google’s algorithmic system, having seen everything you have published, has chosen not to include a fraction of it in the index. The remediation path is not “fix the missing field.” It is “make the content substantively better, or build the authority signals that lower the threshold to where your content can clear it.”

The Compounding Implication

Once a corpus has a non-trivial fraction of pages in the “Crawled, currently not indexed” status, adding more content does not fix the problem. It compounds it.

The mechanism is straightforward. Google’s algorithmic system uses signals from the corpus as a whole to set the eligibility threshold for new pages on the same domain. A corpus where 40% of audited pages have been rejected by the index communicates to Google that the domain’s content quality is below the threshold it sets for YMYL inclusion. New pages added to that corpus are evaluated against a threshold that the existing corpus has effectively raised.

The right remediation path is therefore inverse to the intuitive one. Stop adding new content. Triage the existing corpus into three buckets: republish with substantive quality improvements (and only then re-request indexing), retire formally with proper redirect handling, or maintain as-is. Pair the cleanup with parallel authority investment (PR, named-byline placement, awards) that lifts the domain-level authority signal. The corpus shrinks before it grows.

What “Substantive Quality Improvement” Actually Means

The phrase is vague enough to be useless in operations. Let me make it concrete.

A substantive quality improvement on a YMYL page typically includes:

- Named human author attribution with a real expertise claim (credentials, role at a regulated firm, named-byline publication history). Not a generic “Editorial Team” attribution.

- First-hand methodological contribution beyond what is available in the top-10 results for the target query. New analysis, original data, expert commentary, or synthesis that goes beyond aggregation of existing sources.

- Citation density to authoritative sources with active hyperlinks to primary documents (regulatory filings, peer-reviewed research, government data, named industry research). Not citations to other content marketing pieces.

- Substantive length increase if the page is below the depth threshold for the category, but only as a byproduct of the first three items, not as a target in itself.

- Author credentials surfaced in the page schema via a Person entity linked by `@id` to a published Author profile, not just a name in the byline.

Pages that receive all five and are then re-requested for inspection have a realistic chance of being reconsidered. Pages that receive a cosmetic refresh do not.
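To make the last item in the list concrete, here is a minimal sketch of an Article whose author points by `@id` at a separately published Person entity. It is written in Python only so the JSON-LD can be generated and checked. Every name, URL, and credential value in it is a hypothetical placeholder, and the choice of schema.org properties is an assumption about a reasonable minimum, not a required set.

```python
import json

# Hypothetical identifier for the author profile; any stable URL works.
AUTHOR_ID = "https://example.com/authors/jane-doe#person"

# Person entity published on the author profile page (placeholder values).
author = {
    "@context": "https://schema.org",
    "@type": "Person",
    "@id": AUTHOR_ID,
    "name": "Jane Doe",
    "jobTitle": "Senior Compliance Analyst",
    "url": "https://example.com/authors/jane-doe",
}

# Article entity on the content page. The author is a reference by @id,
# not a bare name string in the byline.
article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Example headline",
    "author": {"@id": AUTHOR_ID},
}


def to_jsonld_script(entity):
    """Wrap an entity in the script tag used to embed JSON-LD in a page."""
    body = json.dumps(entity, indent=2)
    return f'<script type="application/ld+json">\n{body}\n</script>'


if __name__ == "__main__":
    print(to_jsonld_script(author))
    print(to_jsonld_script(article))
```

The point of the shared `@id` is that the article and the profile page describe the same entity, so the credentials live in one place and every article references them.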

The Diagnostic Procedure

If you suspect this status is affecting your corpus, the procedure is:

- Pull URL Inspection data on a representative sample. Aim for at least 30 to 60 URLs covering pillar pages, sub-pillars or category hubs, and a random sample of blog posts. The sample needs to be large and varied enough to reflect the whole corpus, not cherry-picked from your best content.

- Bucket the URLs by status reason. “Indexed without issues,” “Indexed with issues,” “Crawled, currently not indexed,” “Discovered, currently not indexed,” “URL is unknown to Google,” “Soft 404,” and any others that surface.

- Calculate the cleanly-indexed percentage. A healthy corpus in a low-YMYL category targets 80% or above. A YMYL corpus that is below 50% is in structural trouble.

- Read the cleanly-indexed cohort. What is different about the pages that cleared the threshold? Author attribution? Length? Topic specificity? Citation density? The pattern is your eligibility signal.

- Triage the unindexed cohort against the cleanly-indexed pattern. This becomes your remediation plan.
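The bucketing and percentage steps above can be sketched in a few lines of Python. This is an illustration under stated assumptions: the sample rows are invented, the `url` and `status` keys are hypothetical rather than a Search Console export format, and the status strings simply reuse the labels from the list above.

```python
from collections import Counter

# Hypothetical hand-collected sample. The "url" and "status" keys are
# assumptions for illustration, not a Search Console export format.
SAMPLE = [
    {"url": "https://example.com/pillar", "status": "Indexed without issues"},
    {"url": "https://example.com/hub", "status": "Indexed without issues"},
    {"url": "https://example.com/blog/a", "status": "Crawled, currently not indexed"},
    {"url": "https://example.com/blog/b", "status": "Crawled, currently not indexed"},
    {"url": "https://example.com/blog/c", "status": "Discovered, currently not indexed"},
]

CLEAN_STATUS = "Indexed without issues"


def bucket_by_status(rows):
    """Count how many sampled URLs fall under each status reason."""
    return Counter(row["status"] for row in rows)


def cleanly_indexed_pct(rows):
    """Return the share of sampled URLs that are cleanly indexed, in percent."""
    if not rows:
        raise ValueError("sample is empty")
    clean = sum(1 for row in rows if row["status"] == CLEAN_STATUS)
    return 100.0 * clean / len(rows)


def triage_cohort(rows):
    """List the URLs that are not cleanly indexed and need triage."""
    return [row["url"] for row in rows if row["status"] != CLEAN_STATUS]


if __name__ == "__main__":
    for status, count in bucket_by_status(SAMPLE).most_common():
        print(f"{count:>3}  {status}")
    print(f"Cleanly indexed: {cleanly_indexed_pct(SAMPLE):.1f}%")
    print("To triage:", triage_cohort(SAMPLE))
```

With this invented sample, two of five URLs are cleanly indexed, so the figure to compare against the 80% and 50% reference points is 40%. Reading the cleanly-indexed cohort and triaging the rest remain manual, editorial steps.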

The procedure is not difficult. It takes a competent Search Engine Optimization (SEO) analyst a day or two for a corpus of 100-150 URLs. The result is the most actionable audit output most YMYL sites have access to, and it is sitting in Search Console, unread, on a substantial majority of the sites I have ever inspected.

The Discipline

Most SEO conversations focus on rankings, traffic, and conversions. Those are output metrics. Indexing is the precondition for any of them. A page that is not indexed cannot rank, cannot generate traffic, and cannot convert. A corpus where indexing is failing at scale is a corpus where every downstream metric is mechanically capped, regardless of how much content you produce, how good your keyword research is, or how much you spend on link building.

The discipline of reading indexing data first, and treating it as Google’s primary quality verdict on your work, is the single most underrated SEO practice I encounter. It is also the one that produces the highest-value diagnostic in the shortest time, on the most important variable, for the lowest cost. If you have not pulled a URL Inspection sample on your corpus in the last six months, that is the work that comes before the work you are planning to do this quarter.

About the Author

Andrés Plashal

Senior Marketing Executive and Strategic Revenue & Search Marketing Engineer. $150M+ attributed revenue across 30+ companies. Google Partner since 2017.

Credentials: UIUC Gies College of Business (Behavioral Science), Columbia College Chicago (Interactive Arts & Media). Member: American Marketing Association, GAABS, Paid Search Association. Published researcher (SCTE/NCTA).